Ensemble Learning 3: XGBoost & LightGBM

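XGBoost official documentation

XGBoost is essentially still a GBDT. It is an optimized distributed gradient boosting library designed to be efficient, flexible, and portable. XGBoost uses CART decision trees as its base learners and combines many CART trees through Gradient Tree Boosting to obtain the final model.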

Building the final XGBoost model: following Tianqi Chen's paper, the data set is $\mathcal{D}=\left\{\left(\mathbf{x}_{i}, y_{i}\right)\right\}\left(|\mathcal{D}|=n, \mathbf{x}_{i} \in \mathbb{R}^{m}, y_{i} \in \mathbb{R}\right)$

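(1) Constructing the objective function: suppose there are $K$ trees. The output for the $i$-th sample is $\hat{y}_{i}=\phi\left(\mathbf{x}_{i}\right)=\sum_{k=1}^{K} f_{k}\left(\mathbf{x}_{i}\right), \quad f_{k} \in \mathcal{F}$, where $\mathcal{F}=\left\{f(\mathbf{x})=w_{q(\mathbf{x})}\right\}\left(q: \mathbb{R}^{m} \rightarrow T, w \in \mathbb{R}^{T}\right)$. Here $q$ maps a sample to the index of the leaf it falls into, $T$ is the number of leaves, and $w$ is the vector of leaf weights. The objective function is therefore:

$$
\mathcal{L}(\phi)=\sum_{i} l\left(\hat{y}_{i}, y_{i}\right)+\sum_{k} \Omega\left(f_{k}\right)
$$

where $\sum_{i} l\left(\hat{y}_{i}, y_{i}\right)$ is the loss function and $\sum_{k} \Omega\left(f_{k}\right)$ is the regularization term.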
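To make the notation concrete, here is a minimal pure-Python sketch of the additive model and its regularized objective. Each tree is represented as a pair `(q, w)`, matching $f(\mathbf{x})=w_{q(\mathbf{x})}$. The two stumps, the thresholds, the squared-error loss, and the values of `gamma` and `lam` are made up for illustration, and the complexity term is assumed to be $\Omega(f)=\gamma T+\frac{1}{2}\lambda\sum_j w_j^2$, the form that appears later in the derivation.

```python
from typing import Callable, List, Sequence, Tuple

# A tree f(x) = w[q(x)]: q maps a sample to a leaf index, w holds the leaf weights.
Tree = Tuple[Callable[[Sequence[float]], int], List[float]]


def predict(trees: List[Tree], x: Sequence[float]) -> float:
    """Additive ensemble output: y_hat = sum_k f_k(x)."""
    return sum(w[q(x)] for q, w in trees)


def omega(tree: Tree, gamma: float, lam: float) -> float:
    """Complexity of one tree: gamma * T + 0.5 * lam * sum_j w_j^2."""
    _, w = tree
    return gamma * len(w) + 0.5 * lam * sum(wj * wj for wj in w)


def squared_error(y_hat: float, y: float) -> float:
    return (y_hat - y) ** 2


def objective(trees, X, y, loss, gamma=1.0, lam=1.0) -> float:
    """L(phi) = sum_i l(y_hat_i, y_i) + sum_k Omega(f_k)."""
    data_term = sum(loss(predict(trees, xi), yi) for xi, yi in zip(X, y))
    reg_term = sum(omega(t, gamma, lam) for t in trees)
    return data_term + reg_term


# Two hypothetical stumps on a single feature (illustrative values only).
tree1: Tree = (lambda x: 0 if x[0] < 30 else 1, [1.0, 3.0])
tree2: Tree = (lambda x: 0 if x[0] < 50 else 1, [-0.5, 0.5])
trees = [tree1, tree2]

X = [[20.0], [40.0], [60.0]]
y = [1.0, 2.0, 4.0]

preds = [predict(trees, xi) for xi in X]
total = objective(trees, X, y, squared_error, gamma=1.0, lam=1.0)
print(preds, total)
```

The loss term only sees the summed prediction, while the penalty is charged per tree, which is why the two sums in the objective run over different indices.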

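(2) Additive Training:

Given a sample $\mathbf{x}_i$, we have $\hat{y}_i^{(0)} = 0$ (the initial prediction), $\hat{y}_i^{(1)} = \hat{y}_i^{(0)} + f_1(\mathbf{x}_i)$, $\hat{y}_i^{(2)} = \hat{y}_i^{(0)} + f_1(\mathbf{x}_i) + f_2(\mathbf{x}_i) = \hat{y}_i^{(1)} + f_2(\mathbf{x}_i)$, and so on, which gives:

$$
\hat{y}_i^{(K)} = \hat{y}_i^{(K-1)} + f_K(\mathbf{x}_i)
$$

where $\hat{y}_i^{(K-1)}$ is the prediction of the first $K-1$ trees and $f_K(\mathbf{x}_i)$ is the prediction of the $K$-th tree. The objective function can therefore be decomposed as:

$$
\mathcal{L}^{(K)}=\sum_{i=1}^{n} l\left(y_{i}, \hat{y}_{i}^{(K-1)}+f_{K}\left(\mathbf{x}_{i}\right)\right)+\sum_{k} \Omega\left(f_{k}\right)
$$

The regularization term can also be split into the complexity of the first $K-1$ trees plus the complexity of the $K$-th tree:

$$
\mathcal{L}^{(K)}=\sum_{i=1}^{n} l\left(y_{i}, \hat{y}_{i}^{(K-1)}+f_{K}\left(\mathbf{x}_{i}\right)\right)+\sum_{k=1}^{K-1} \Omega\left(f_{k}\right)+\Omega\left(f_{K}\right)
$$

By the time the $K$-th tree is being built, $\sum_{k=1}^{K-1} \Omega\left(f_{k}\right)$ is already fixed. It is a known constant and can be dropped from the optimization, so:

$$
\mathcal{L}^{(K)}=\sum_{i=1}^{n} l\left(y_{i}, \hat{y}_{i}^{(K-1)}+f_{K}\left(\mathbf{x}_{i}\right)\right)+\Omega\left(f_{K}\right)
$$

(3) Approximating the objective with a Taylor series:

$$
\mathcal{L}^{(K)} \simeq \sum_{i=1}^{n}\left[l\left(y_{i}, \hat{y}_{i}^{(K-1)}\right)+g_{i} f_{K}\left(\mathbf{x}_{i}\right)+\frac{1}{2} h_{i} f_{K}^{2}\left(\mathbf{x}_{i}\right)\right]+\Omega\left(f_{K}\right)
$$

where $g_{i}=\partial_{\hat{y}_{i}^{(K-1)}} l\left(y_{i}, \hat{y}_{i}^{(K-1)}\right)$ and $h_{i}=\partial_{\hat{y}_{i}^{(K-1)}}^{2} l\left(y_{i}, \hat{y}_{i}^{(K-1)}\right)$.

A short reminder about Taylor series: in mathematics, a Taylor series represents a function as an infinite sum of terms, each computed from the derivatives of the function at a single point. Its form is:

$$
f(x)=\frac{f\left(x_{0}\right)}{0 !}+\frac{f^{\prime}\left(x_{0}\right)}{1 !}\left(x-x_{0}\right)+\frac{f^{\prime \prime}\left(x_{0}\right)}{2 !}\left(x-x_{0}\right)^{2}+\ldots+\frac{f^{(n)}\left(x_{0}\right)}{n !}\left(x-x_{0}\right)^{n}+\ldots
$$

The approximation above is this expansion of the loss around $x_0=\hat{y}_{i}^{(K-1)}$ with $x-x_0=f_K(\mathbf{x}_i)$, truncated after the second-order term.

Since $\sum_{i=1}^{n} l\left(y_{i}, \hat{y}_{i}^{(K-1)}\right)$ is also fixed by the time the $K$-th tree is built, it is a known constant and can be dropped from the optimization, so:

$$
\tilde{\mathcal{L}}^{(K)}=\sum_{i=1}^{n}\left[g_{i} f_{K}\left(\mathbf{x}_{i}\right)+\frac{1}{2} h_{i} f_{K}^{2}\left(\mathbf{x}_{i}\right)\right]+\Omega\left(f_{K}\right)
$$

(4) How to define a tree: to describe a tree we need a few concepts: the leaf weights $w$, the function $q$ that assigns each sample to a leaf, and the set $I_j$ of samples that fall into leaf $j$: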

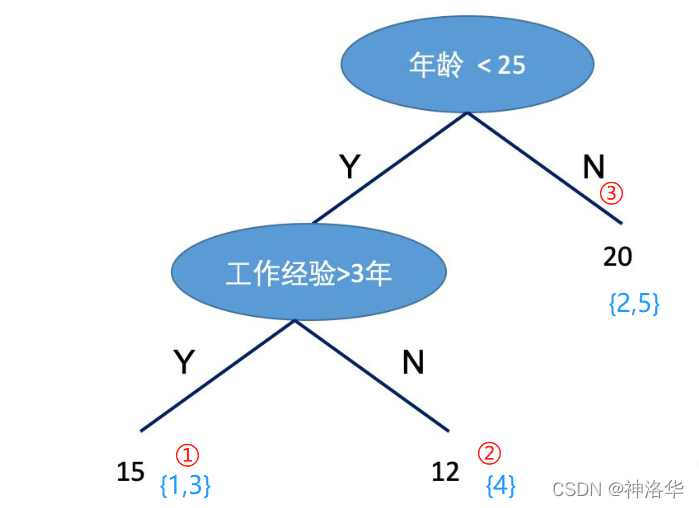

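In this example, $q(\mathbf{x}_1) = 1$, $q(\mathbf{x}_2) = 3$, $q(\mathbf{x}_3) = 1$, $q(\mathbf{x}_4) = 2$, $q(\mathbf{x}_5) = 3$, so $I_1 = \{1,3\}$, $I_2 = \{4\}$, $I_3 = \{2,5\}$, and $w = (15,12,20)$. In general, $I_j=\{i \mid q(\mathbf{x}_i)=j\}$ is the set of samples assigned to leaf $j$, and $f_K(\mathbf{x}_i)=w_{q(\mathbf{x}_i)}$. Taking $\Omega(f_K)=\gamma T+\frac{1}{2}\lambda\sum_{j=1}^{T} w_j^2$ and grouping the samples by leaf, the objective becomes:

$$
\begin{aligned}
\tilde{\mathcal{L}}^{(K)} &=\sum_{i=1}^{n}\left[g_{i} f_{K}\left(\mathbf{x}_{i}\right)+\frac{1}{2} h_{i} f_{K}^{2}\left(\mathbf{x}_{i}\right)\right]+\gamma T+\frac{1}{2} \lambda \sum_{j=1}^{T} w_{j}^{2} \\
&=\sum_{j=1}^{T}\left[\left(\sum_{i \in I_{j}} g_{i}\right) w_{j}+\frac{1}{2}\left(\sum_{i \in I_{j}} h_{i}+\lambda\right) w_{j}^{2}\right]+\gamma T
\end{aligned}
$$

Our goal is to minimize the objective, which has now been reduced to a quadratic function of each $w_j$:

$$
\tilde{\mathcal{L}}^{(K)}=\sum_{j=1}^{T}\left[\left(\sum_{i \in I_{j}} g_{i}\right) w_{j}+\frac{1}{2}\left(\sum_{i \in I_{j}} h_{i}+\lambda\right) w_{j}^{2}\right]+\gamma T
$$

For a quadratic $y=ax^2+bx+c$, the extremum lies on the axis of symmetry $x=-\frac{b}{2a}$ and has the value $y=\frac{4ac-b^{2}}{4a}$. Applying this to each leaf with $a=\frac{1}{2}\left(\sum_{i \in I_{j}} h_{i}+\lambda\right)$, $b=\sum_{i \in I_{j}} g_{i}$, and $c=0$ gives:

$$
w_{j}^{*}=-\frac{\sum_{i \in I_{j}} g_{i}}{\sum_{i \in I_{j}} h_{i}+\lambda}
$$

and

$$
\tilde{\mathcal{L}}^{(K)}(q)=-\frac{1}{2} \sum_{j=1}^{T} \frac{\left(\sum_{i \in I_{j}} g_{i}\right)^{2}}{\sum_{i \in I_{j}} h_{i}+\lambda}+\gamma T
$$

(5) How to find the shape of the tree: everything so far computes $w$ and $\tilde{\mathcal{L}}$ for a tree whose shape is already fixed. In practice we still have to find that shape, just as when learning an ordinary decision tree. We therefore borrow the decision-tree approach and use the change in the objective function as the criterion for splitting a node. An example illustrates this: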

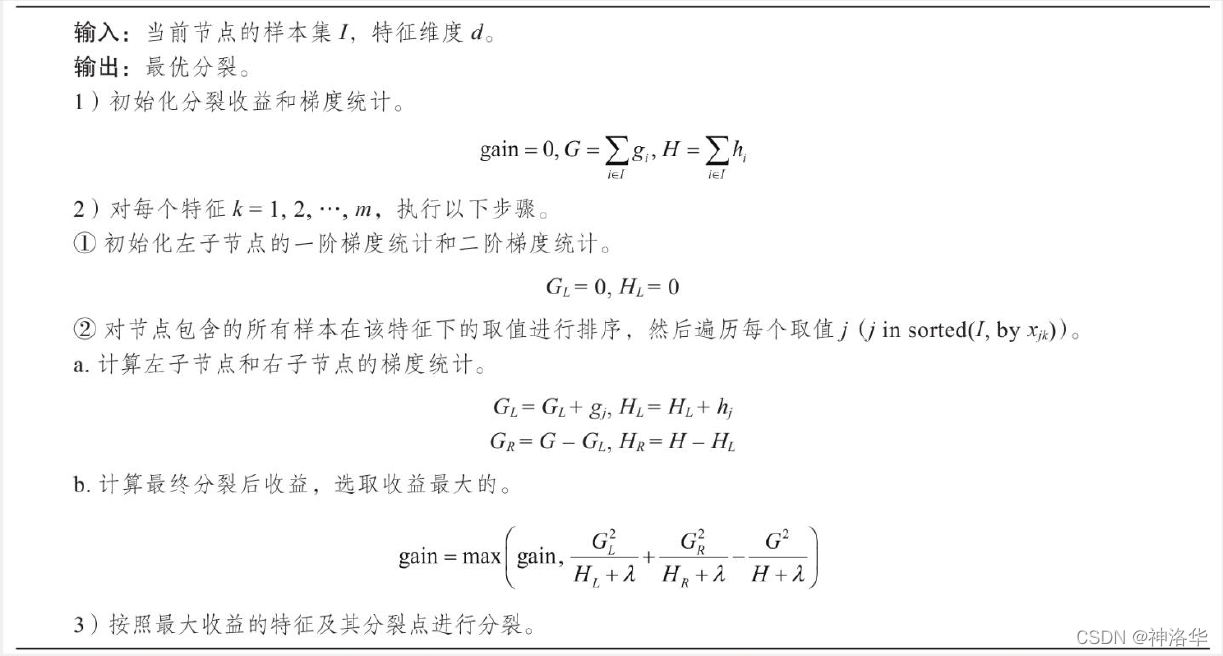

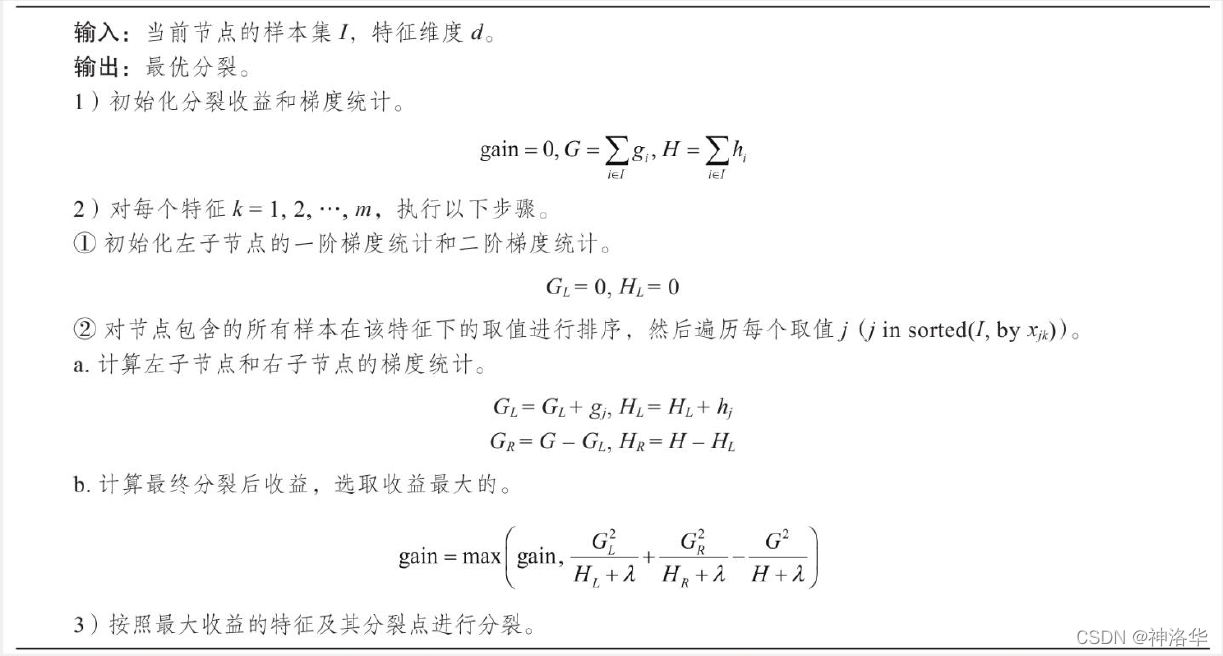

The example has 8 samples. Before the split, the tree has two leaves, $\{7,8\}$ and $\{1,\ldots,6\}$. The split divides the second leaf into $\{1,2,3\}$ and $\{4,5,6\}$. Therefore:

$$
\tilde{\mathcal{L}}^{(old)} = -\frac{1}{2}\left[\frac{(g_7 + g_8)^2}{h_7+h_8 + \lambda} + \frac{(g_1 +\ldots+ g_6)^2}{h_1+\ldots+h_6 + \lambda}\right] + 2\gamma
$$

$$
\tilde{\mathcal{L}}^{(new)} = -\frac{1}{2}\left[\frac{(g_7 + g_8)^2}{h_7+h_8 + \lambda} + \frac{(g_1 +\ldots+ g_3)^2}{h_1+\ldots+h_3 + \lambda} + \frac{(g_4 +\ldots+ g_6)^2}{h_4+\ldots+h_6 + \lambda}\right] + 3\gamma
$$

$$
\tilde{\mathcal{L}}^{(old)} - \tilde{\mathcal{L}}^{(new)} = \frac{1}{2}\left[\frac{(g_1 +\ldots+ g_3)^2}{h_1+\ldots+h_3 + \lambda} + \frac{(g_4 +\ldots+ g_6)^2}{h_4+\ldots+h_6 + \lambda} - \frac{(g_1+\ldots+g_6)^2}{h_1+\ldots+h_6+\lambda}\right] - \gamma
$$

As the example shows, the criterion for splitting a node is to maximize $\tilde{\mathcal{L}}^{(old)} - \tilde{\mathcal{L}}^{(new)}$, that is:

$$
\mathcal{L}_{\text{split}}=\frac{1}{2}\left[\frac{\left(\sum_{i \in I_{L}} g_{i}\right)^{2}}{\sum_{i \in I_{L}} h_{i}+\lambda}+\frac{\left(\sum_{i \in I_{R}} g_{i}\right)^{2}}{\sum_{i \in I_{R}} h_{i}+\lambda}-\frac{\left(\sum_{i \in I} g_{i}\right)^{2}}{\sum_{i \in I} h_{i}+\lambda}\right]-\gamma
$$
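Steps (3) to (5) can be tied together in a small pure-Python sketch: compute $g_i$ and $h_i$ for a loss, then scan the sorted values of one feature and score every candidate split with the gain formula. This is an illustration under stated assumptions rather than XGBoost's actual implementation: the log loss, the six-sample toy data, the function names, and the values `lam=1.0` and `gamma=0.0` are all chosen here for the example.

```python
import math
from typing import List, Optional, Sequence, Tuple


def logistic_grad_hess(y: Sequence[float], margin: Sequence[float]) -> Tuple[List[float], List[float]]:
    """g_i and h_i of the log loss with respect to the raw score y_hat^(K-1)."""
    g, h = [], []
    for yi, mi in zip(y, margin):
        p = 1.0 / (1.0 + math.exp(-mi))  # predicted probability
        g.append(p - yi)
        h.append(p * (1.0 - p))
    return g, h


def leaf_weight(G: float, H: float, lam: float) -> float:
    """Optimal leaf weight w* = -G / (H + lambda)."""
    return -G / (H + lam)


def leaf_score(G: float, H: float, lam: float) -> float:
    """Per-leaf term G^2 / (H + lambda) of the structure score."""
    return G * G / (H + lam)


def split_gain(GL: float, HL: float, GR: float, HR: float, lam: float, gamma: float) -> float:
    """L_split for a candidate split into a left and a right child."""
    return 0.5 * (leaf_score(GL, HL, lam) + leaf_score(GR, HR, lam)
                  - leaf_score(GL + GR, HL + HR, lam)) - gamma


def best_split(x: Sequence[float], g: Sequence[float], h: Sequence[float],
               lam: float = 1.0, gamma: float = 0.0) -> Tuple[float, Optional[float]]:
    """Exact greedy search on one feature: scan sorted values with running sums."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    G, H = sum(g), sum(h)
    GL = HL = 0.0
    best_gain, best_threshold = float("-inf"), None
    for pos in range(len(order) - 1):
        i, nxt = order[pos], order[pos + 1]
        GL += g[i]
        HL += h[i]
        if x[i] == x[nxt]:
            continue  # cannot place a threshold between equal values
        gain = split_gain(GL, HL, G - GL, H - HL, lam, gamma)
        if gain > best_gain:
            best_gain, best_threshold = gain, 0.5 * (x[i] + x[nxt])
    return best_gain, best_threshold


# Toy data: the label flips between x = 3 and x = 4; all raw scores start at 0.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]
g, h = logistic_grad_hess(y, margin=[0.0] * len(x))

gain, threshold = best_split(x, g, h, lam=1.0, gamma=0.0)
left = [i for i in range(len(x)) if x[i] < threshold]
w_left = leaf_weight(sum(g[i] for i in left), sum(h[i] for i in left), lam=1.0)
print(gain, threshold, w_left)
```

On this data the chosen threshold separates the two label groups, and the left leaf receives a negative weight, pushing its raw score toward the negative class. Raising `gamma` lowers every gain by the same amount, so a large enough `gamma` makes all gains negative and the node would be left unsplit.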

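The histogram-based approximate algorithm selects the best feature and split point more efficiently. The main idea is to group each feature's values into a limited number of buckets and to evaluate candidate splits only at the bucket boundaries, rather than at every distinct value.

The computation proceeds as follows: propose candidate split points for each feature (for example from its percentiles), map the samples into the resulting buckets, accumulate the sums of $g_i$ and $h_i$ per bucket, and choose the candidate with the largest split gain.

The following example illustrates the histogram-based approximate algorithm. Suppose there is an age feature with the values 18, 19, 21, 31, 36, 37, 55, 57, and we want to use the approximate algorithm to find the best split point for this feature.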
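A minimal sketch of the idea on the age feature above, in pure Python. Several things here are assumptions made for illustration: the gradient statistics `g` and `h` are hypothetical (they correspond to a log loss at raw score 0 with the four oldest samples labelled positive), the buckets are plain equal-frequency buckets with `n_bins=4`, and `lam` and `gamma` are arbitrary. XGBoost itself proposes candidates with a weighted quantile sketch, so this only shows the bucket-then-scan structure.

```python
import bisect
from typing import List, Optional, Sequence, Tuple


def equal_frequency_edges(values: Sequence[float], n_bins: int) -> List[float]:
    """Candidate split points: boundaries between equal-frequency buckets."""
    s = sorted(values)
    edges: List[float] = []
    for b in range(1, n_bins):
        k = b * len(s) // n_bins  # index of the first value in bucket b
        if k == 0:
            continue  # more buckets than samples
        edge = 0.5 * (s[k - 1] + s[k])
        if not edges or edge > edges[-1]:
            edges.append(edge)
    return edges


def build_histogram(values: Sequence[float], g: Sequence[float], h: Sequence[float],
                    edges: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Accumulate the sums of g and h in each bucket."""
    G = [0.0] * (len(edges) + 1)
    H = [0.0] * (len(edges) + 1)
    for v, gi, hi in zip(values, g, h):
        b = bisect.bisect_left(edges, v)  # values <= edges[b] fall into bucket b
        G[b] += gi
        H[b] += hi
    return G, H


def best_histogram_split(G: Sequence[float], H: Sequence[float], edges: Sequence[float],
                         lam: float = 1.0, gamma: float = 0.0) -> Tuple[float, Optional[float]]:
    """Evaluate the split gain only at bucket boundaries."""
    def score(g_sum: float, h_sum: float) -> float:
        return g_sum * g_sum / (h_sum + lam)

    G_tot, H_tot = sum(G), sum(H)
    GL = HL = 0.0
    best_gain, best_edge = float("-inf"), None
    for b, edge in enumerate(edges):
        GL += G[b]
        HL += H[b]
        gain = 0.5 * (score(GL, HL) + score(G_tot - GL, H_tot - HL)
                      - score(G_tot, H_tot)) - gamma
        if gain > best_gain:
            best_gain, best_edge = gain, edge
    return best_gain, best_edge


ages = [18, 19, 21, 31, 36, 37, 55, 57]
g = [0.5, 0.5, 0.5, 0.5, -0.5, -0.5, -0.5, -0.5]  # hypothetical first derivatives
h = [0.25] * len(ages)                            # hypothetical second derivatives

edges = equal_frequency_edges(ages, n_bins=4)
G, H = build_histogram(ages, g, h, edges)
gain, edge = best_histogram_split(G, H, edges)
print(edges, G, H, gain, edge)
```

With eight values and four buckets, only three candidate thresholds are scored instead of seven. The saving grows with the number of distinct values, at the cost of possibly missing the exact best threshold inside a bucket.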

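    # Getting started with the native XGBoost library:
    import xgboost as xgb  # import the library

    # read in data
    # DMatrix is XGBoost's own data format; it can also be built from a DataFrame or an ndarray.
    # Newer XGBoost releases may require the text format to be stated in the path,
    # e.g. 'demo/data/agaricus.txt.train?format=libsvm'.
    dtrain = xgb.DMatrix('demo/data/agaricus.txt.train')
    dtest = xgb.DMatrix('demo/data/agaricus.txt.test')

    # specify parameters via map
    param = {'max_depth': 2, 'eta': 1, 'objective': 'binary:logistic'}  # XGBoost parameters, passed as a dict
    num_round = 2  # number of boosting rounds (trees), not the number of threads

    bst = xgb.train(param, dtrain, num_round)  # train

    # make prediction
    preds = bst.predict(dtest)  # predict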

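XGBoost parameter settings (names in parentheses are the corresponding parameter names in the sklearn interface):

Recommended reading: blog post; official documentation.

XGBoost parameters fall into three groups:

General parameters: (there are two types of booster. Because tree boosters perform much better than the linear booster, the linear booster is rarely used.)

Task parameters (these control the optimization objective and the metric evaluated at each step.)

Command-line parameters (not covered here, because the command-line console version is rarely used)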
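As a rough illustration of how these groups look in practice, the sketch below builds a parameter dict for the native interface and renames a few keys to their sklearn-interface equivalents. It follows the grouping used in the official documentation (general, booster, and learning-task parameters). The values are placeholders rather than recommendations, and the variable names and the `NATIVE_TO_SKLEARN` mapping are helpers introduced here for the example.

```python
# General parameters: which booster to use and how many threads to run.
general_params = {"booster": "gbtree", "nthread": 4}

# Booster (tree) parameters; the comment gives the sklearn-interface name.
booster_params = {
    "eta": 0.1,               # learning_rate
    "max_depth": 4,           # max_depth
    "min_child_weight": 1,    # min_child_weight
    "gamma": 0.0,             # gamma (alias: min_split_loss)
    "subsample": 0.8,         # subsample
    "colsample_bytree": 0.8,  # colsample_bytree
    "lambda": 1.0,            # reg_lambda
    "alpha": 0.0,             # reg_alpha
}

# Learning-task parameters: the objective and the evaluation metric.
task_params = {"objective": "binary:logistic", "eval_metric": "auc"}

# Dict that could be passed as the first argument of xgb.train(...).
params = {**general_params, **booster_params, **task_params}

# Keys whose names differ between the native and the sklearn interface.
NATIVE_TO_SKLEARN = {
    "eta": "learning_rate",
    "lambda": "reg_lambda",
    "alpha": "reg_alpha",
    "nthread": "n_jobs",
}
sklearn_kwargs = {NATIVE_TO_SKLEARN.get(k, k): v for k, v in params.items()}
print(sklearn_kwargs)
```

In the sklearn interface the number of boosting rounds is the `n_estimators` argument, whereas the native interface takes it as `num_boost_round` in `xgb.train` rather than inside the parameter dict.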

General steps for parameter tuning:

For the full API, see: https://xgboost.readthedocs.io/en/latest/python/python_api.html Recommended GitHub examples: https://github.com/dmlc/xgboost/tree/master/demo/guide-pytho

See also Datawhale's "Ensemble Learning: Boosting".

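LightGBM, like XGBoost, is an ensemble algorithm. Overall it works the same way as XGBoost, and the underlying algorithm does not differ in essence. It adds a set of optimizations on top of XGBoost.

Advantages of LightGBM: 1) faster training, 2) lower memory usage, 3) higher accuracy, 4) support for parallel learning.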

1. Core parameters (names in parentheses are aliases):

2. Parameters that control the learning process:

3. Metric parameters:

4. GPU parameters:

    import itertools

    import lightgbm as lgb
    from sklearn import metrics
    from sklearn.datasets import load_breast_cancer
    from sklearn.model_selection import train_test_split

    cancer_data = load_breast_cancer()
    X = cancer_data.data
    y = cancer_data.target
    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0, test_size=0.2)

    ### Data conversion
    print('Converting data')
    # free_raw_data=False keeps the raw data, so the Dataset can be rebuilt when
    # dataset-level parameters such as max_bin change during tuning.
    lgb_train = lgb.Dataset(X_train, y_train, free_raw_data=False)
    lgb_eval = lgb.Dataset(X_test, y_test, reference=lgb_train, free_raw_data=False)

    ### Initial parameters (excluding the ones tuned by cross-validation)
    print('Setting parameters')
    params = {
        'boosting_type': 'gbdt',
        'objective': 'binary',
        'metric': 'auc',
        'nthread': 4,
        'learning_rate': 0.1
    }

    ### Cross-validation (parameter tuning)
    print('Cross-validation')
    max_auc = 0.0
    best_params = {}

    def cv_auc(trial_params):
        """Best mean AUC over the boosting rounds of a 5-fold cross-validation."""
        cv_results = lgb.cv(
            trial_params,
            lgb_train,
            seed=1,
            nfold=5,
            metrics=['auc'],
            # LightGBM 4.x no longer accepts early_stopping_rounds / verbose_eval
            # as keyword arguments; the callback form also works on older releases.
            callbacks=[lgb.early_stopping(stopping_rounds=10, verbose=False)]
        )
        # The result key is 'auc-mean' on older releases and 'valid auc-mean' on 4.x.
        key = 'valid auc-mean' if 'valid auc-mean' in cv_results else 'auc-mean'
        return max(cv_results[key])

    def tune(grid, max_auc):
        """Grid-search the parameters in `grid`. A combination is kept only if its
        CV AUC is at least as good as the best AUC found so far."""
        names = list(grid)
        best = None
        for values in itertools.product(*grid.values()):
            candidate = dict(zip(names, values))
            mean_auc = cv_auc({**params, **candidate})
            if mean_auc >= max_auc:
                max_auc, best = mean_auc, candidate
        if best is not None:
            # The original `if 'a' and 'b' in d` test only checked the last key.
            best_params.update(best)
            params.update(best)
        return max_auc

    # Accuracy
    print("Tuning 1: improve accuracy")
    max_auc = tune({'num_leaves': range(5, 100, 5),
                    'max_depth': range(3, 8, 1)}, max_auc)

    # Overfitting
    print("Tuning 2: reduce overfitting")
    max_auc = tune({'max_bin': range(5, 256, 10),
                    'min_data_in_leaf': range(1, 102, 10)}, max_auc)

    print("Tuning 3: reduce overfitting")
    max_auc = tune({'feature_fraction': [0.6, 0.7, 0.8, 0.9, 1.0],
                    'bagging_fraction': [0.6, 0.7, 0.8, 0.9, 1.0],
                    'bagging_freq': range(0, 50, 5)}, max_auc)

    print("Tuning 4: reduce overfitting")
    max_auc = tune({'lambda_l1': [1e-5, 1e-3, 1e-1, 0.0, 0.1, 0.3, 0.5, 0.7, 0.9, 1.0],
                    'lambda_l2': [1e-5, 1e-3, 1e-1, 0.0, 0.1, 0.4, 0.6, 0.7, 0.9, 1.0]}, max_auc)

    print("Tuning 5: reduce overfitting 2")
    max_auc = tune({'min_split_gain': [0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]}, max_auc)

    print(best_params)

Output:

    {'bagging_fraction': 0.7,
     'bagging_freq': 30,
     'feature_fraction': 0.8,
     'lambda_l1': 0.1,
     'lambda_l2': 0.0,
     'max_bin': 255,
     'max_depth': 4,
     'min_data_in_leaf': 81,
     'min_split_gain': 0.1,
     'num_leaves': 10}
