This post covers HW2-Logistic regression & Mini-batch gradient descent classification.

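Following the sample code, I first wrote logistic regression trained with mini-batches. My implementation differs a little from the sample: I am not very fluent in numpy, so I mostly just multiply matrices and vectors together and do not use operations such as broadcasting.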

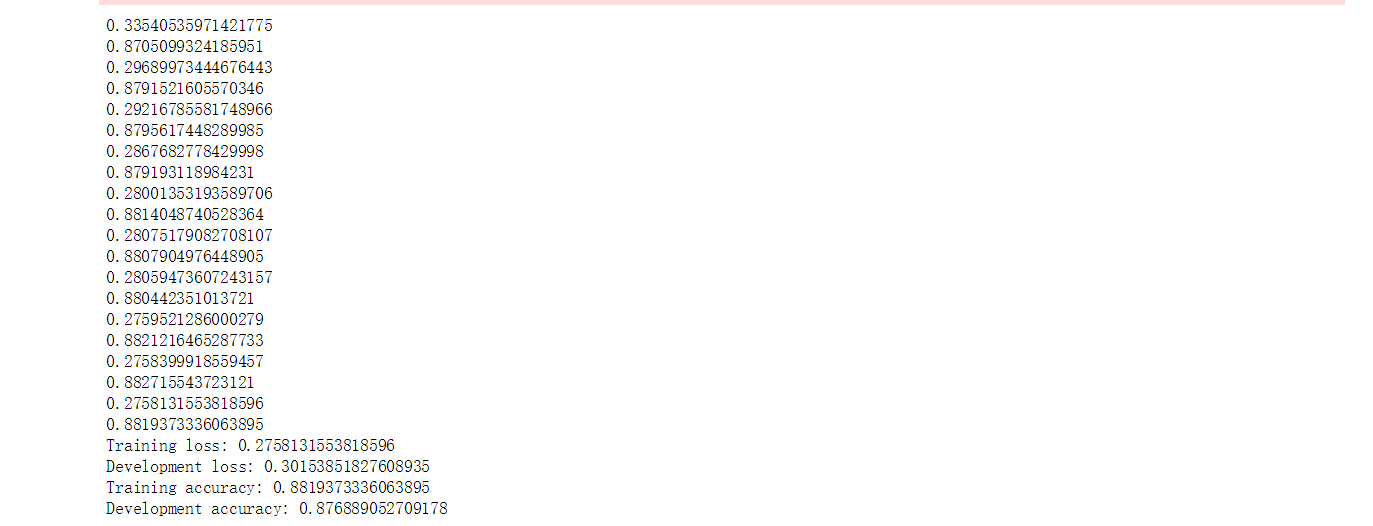

If you follow the sample without any further optimization, the accuracy and loss on the training set come out about the same as the sample's.

```python
import numpy as np

# Each file starts with a header row (skipped with next(f)); the first column is dropped with [1:]
with open("./X_train") as f:
    next(f)
    X_Train = np.array([line.strip('\n').split(',')[1:] for line in f], dtype=float)

with open("./Y_train") as f:
    next(f)
    # Unlike the sample code, the labels are read in here as a column vector of shape (n, 1)
    Y_Train = np.array([line.strip('\n').split(',')[1:] for line in f], dtype=float)

with open("./X_test") as f:
    next(f)
    X_test = np.array([line.strip('\n').split(',')[1:] for line in f], dtype=float)
```
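To see what the parsing line does without the HW2 files, here is a minimal sketch on in-memory files. It assumes, as the `next(f)` and `[1:]` in the code suggest, that each file starts with a header row and that the first column is a row id; the helper `read_rows` and the sample contents are hypothetical.

```python
import io
import numpy as np

def read_rows(f):
    """Skip the header row, drop the first (id) column, parse the rest as floats."""
    next(f)  # skip the header line
    return np.array([line.strip('\n').split(',')[1:] for line in f], dtype=float)

# Hypothetical file contents in the assumed "id,feature,..." layout
x_file = io.StringIO("id,age,hours\n0,25,40\n1,38,50\n")
y_file = io.StringIO("id,label\n0,1\n1,0\n")

X_demo = read_rows(x_file)  # shape (2, 2): one row per sample, id column removed
Y_demo = read_rows(y_file)  # shape (2, 1): labels come out as a column vector
print(X_demo.shape, Y_demo.shape)
```

Because every row keeps its single label as a one-element list, the label array is 2-D with one column, which is why the rest of the code treats `Y_Train` as a column vector.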

$$X_{after} = \frac{X - X_{mean}}{\sigma}$$

```python
# Mean and standard deviation of each feature, computed on the training set only
X_mean = np.mean(X_Train, axis=0)
X_std = np.std(X_Train, axis=0)

# Normalize X_Train (the 1e-8 avoids dividing by zero for constant columns)
X_Train = (X_Train - X_mean) / (X_std + 1e-8)

# Normalize X_test the same way, reusing the training-set statistics
X_test = (X_test - X_mean) / (X_std + 1e-8)
```
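A quick sanity check of this standardization on a small made-up matrix (the `_demo` arrays are hypothetical). It shows that the training columns end up with mean about 0 and standard deviation about 1, that the test row is scaled with the training statistics, and that a constant column, whose standard deviation is 0, maps to 0 instead of causing a division by zero thanks to the `1e-8` term.

```python
import numpy as np

# Hypothetical training and test matrices; the last column is constant
X_train_demo = np.array([[1.0, 10.0, 5.0],
                         [2.0, 20.0, 5.0],
                         [3.0, 30.0, 5.0]])
X_test_demo = np.array([[4.0, 40.0, 5.0]])

X_mean = np.mean(X_train_demo, axis=0)
X_std = np.std(X_train_demo, axis=0)  # population std (ddof=0), np.std's default

X_train_norm = (X_train_demo - X_mean) / (X_std + 1e-8)
X_test_norm = (X_test_demo - X_mean) / (X_std + 1e-8)  # training statistics reused

print(X_train_norm.mean(axis=0))  # approximately [0, 0, 0]
print(X_train_norm.std(axis=0))   # approximately [1, 1, 0]; the constant column stays 0
print(X_test_norm)                # first two entries are about 2.449 (= sqrt(6))
```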

Split the data into a training set and a development set:

```python
dev_ratio = 0.1  # fraction of the data held out as the development set
Train_size = int((1 - dev_ratio) * len(X_Train))

X_dev = X_Train[Train_size:]
Y_dev = Y_Train[Train_size:]
X_Train = X_Train[:Train_size]
Y_Train = Y_Train[:Train_size]
```

```python
# Shuffling
np.random.seed(0)

def _shuffle(X, Y):
    randomize = np.arange(len(X))      # indices 0, 1, ..., len(X) - 1
    np.random.shuffle(randomize)       # shuffle the indices in place
    return X[randomize], Y[randomize]  # index X and Y with the same permutation so pairs stay aligned

# Sigmoid function
def _sigmoid(z):
    # Clip the output to [1e-8, 1 - 1e-8]: values beyond either bound are set to the bound
    return np.clip(1 / (1.0 + np.exp(-z)), 1e-8, 1 - (1e-8))

# Logistic regression output for inputs X
# X: input data
# w: weight vector
# b: bias
def _f(X, w, b):
    return _sigmoid(np.dot(X, w) + b)

# Binary classification result (0 or 1)
def _predict(X, w, b):
    return np.round(_f(X, w, b)).astype(int)

# Accuracy
def _accuracy(Y_pred, Y_label):
    return 1 - np.mean(np.abs(Y_pred - Y_label))
```
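Why `_sigmoid` clips its output: in float64, `1 / (1 + np.exp(-40))` rounds to exactly `1.0`, so if that sample's label is 0 the cross-entropy term `ln(1 - f(x))` becomes `ln(0) = -inf`. The sketch below (function names are hypothetical; the clipping bounds are the ones used in `_sigmoid`) compares the per-sample loss with and without clipping.

```python
import numpy as np

def sigmoid_unclipped(z):
    return 1 / (1.0 + np.exp(-z))

def sigmoid_clipped(z):
    # Same clipping as _sigmoid
    return np.clip(1 / (1.0 + np.exp(-z)), 1e-8, 1 - (1e-8))

def cross_entropy(p, y):
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))

z = np.array([40.0])  # large logit: 1 + exp(-40) rounds to exactly 1.0 in float64
y = np.array([0.0])   # but the true label is 0

with np.errstate(divide='ignore'):  # silence the log(0) warning for the unclipped case
    loss_unclipped = cross_entropy(sigmoid_unclipped(z), y)
loss_clipped = cross_entropy(sigmoid_clipped(z), y)

print(loss_unclipped)  # [inf]
print(loss_clipped)    # about 18.42, i.e. roughly -ln(1e-8)
```

With clipping, a confidently wrong prediction still gets a large but finite loss, so the averaged training loss stays a usable number.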

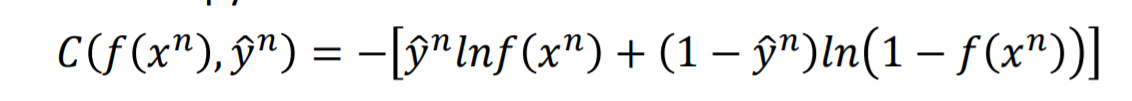
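Written out, with $\hat y^n$ the label of sample $n$ and $f_{w,b}(x^n) = \sigma(w \cdot x^n + b)$, the loss and gradients implemented by `_cross_entropy_loss` and `_gradient` in the code below are as follows. The step from the loss to the gradient uses $\sigma'(z) = \sigma(z)\,(1 - \sigma(z))$:

$$
\begin{aligned}
L(w, b) &= -\sum_n \Big[\hat y^n \ln f_{w,b}(x^n) + (1 - \hat y^n)\ln\big(1 - f_{w,b}(x^n)\big)\Big] \\
\frac{\partial \ln f_{w,b}(x^n)}{\partial w} &= \big(1 - f_{w,b}(x^n)\big)\, x^n, \qquad
\frac{\partial \ln\big(1 - f_{w,b}(x^n)\big)}{\partial w} = -f_{w,b}(x^n)\, x^n \\
\frac{\partial L}{\partial w} &= -\sum_n \Big[\hat y^n \big(1 - f_{w,b}(x^n)\big) - (1 - \hat y^n) f_{w,b}(x^n)\Big] x^n
= -\sum_n \big(\hat y^n - f_{w,b}(x^n)\big)\, x^n \\
\frac{\partial L}{\partial b} &= -\sum_n \big(\hat y^n - f_{w,b}(x^n)\big)
\end{aligned}
$$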

```python
# Cross entropy for one sample: -yhat * ln(f(x_n)) - (1 - yhat) * ln(1 - f(x_n))
# The loss is the sum of the cross entropy over all samples
def _cross_entropy_loss(Y_pred, Y_label):
    cross_entropy = -np.dot(Y_label.transpose(), np.log(Y_pred)) - np.dot(1 - Y_label.transpose(), np.log(1 - Y_pred))
    return cross_entropy[0][0]  # the (1, n) x (n, 1) products give a 1 x 1 matrix

# Gradients: w_grad = -sum_n (yhat_n - f(x_n)) * x_n,  b_grad = -sum_n (yhat_n - f(x_n))
def _gradient(X, Y_label, w, b):
    pred_error = Y_label.flatten() - _f(X, w, b).flatten()  # 1-D array of shape (n,)
    w_grad = -np.dot(pred_error, X)  # inner product with each feature column: shape (d,)
    b_grad = -np.sum(pred_error)     # the derivative w.r.t. b is the sum of pred_error
    return w_grad.reshape(X.shape[1], 1), b_grad  # w_grad returned as a column vector (d, 1)
```
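One way to double-check `_gradient` is a finite-difference gradient check: nudge each parameter by a small `h`, measure how the summed cross-entropy changes, and compare with the analytic gradient. The sketch below re-implements the same formulas on a random mini-batch (all names and data are hypothetical) and leaves out the clipping in `_sigmoid`, which does not kick in for inputs this small.

```python
import numpy as np

def f(X, w, b):
    return 1 / (1.0 + np.exp(-(X @ w + b)))

def loss(X, Y, w, b):
    # Summed cross-entropy, same as _cross_entropy_loss
    p = f(X, w, b)
    return -np.sum(Y * np.log(p) + (1 - Y) * np.log(1 - p))

def gradient(X, Y, w, b):
    # Same formulas as _gradient
    err = Y.flatten() - f(X, w, b).flatten()
    return (-err @ X).reshape(-1, 1), -np.sum(err)

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 3))  # hypothetical mini-batch: 8 samples, 3 features
Y = rng.integers(0, 2, size=(8, 1)).astype(float)
w = rng.normal(size=(3, 1))
b = np.array([0.1])

w_grad, b_grad = gradient(X, Y, w, b)

# Central differences: (L(theta + h) - L(theta - h)) / (2h), one parameter at a time
h = 1e-6
num_w = np.zeros_like(w)
for i in range(w.shape[0]):
    e = np.zeros_like(w)
    e[i] = h
    num_w[i] = (loss(X, Y, w + e, b) - loss(X, Y, w - e, b)) / (2 * h)
num_b = (loss(X, Y, w, b + h) - loss(X, Y, w, b - h)) / (2 * h)

print(np.max(np.abs(num_w - w_grad)))  # should be tiny, far below 1e-5
print(abs(num_b - b_grad))
```

A large mismatch here usually points to a sign error or a transposed product in the analytic gradient.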

```python
# Initialize parameters
w = np.zeros((X_Train.shape[1], 1))
b = np.zeros((1,))
print(w.shape)
print(b)

max_iter = 10         # number of epochs
batch_size = 10       # number of samples in each mini-batch
learning_rate = 0.25

Train_loss = []
dev_loss = []
Train_acc = []
dev_acc = []

cnt = 1  # number of descent steps taken so far, used to adjust the learning rate after every step

for epoch in range(max_iter):
    X_Train, Y_Train = _shuffle(X_Train, Y_Train)

    # Mini-batch training
    for idx in range(Train_size // batch_size):
        X = X_Train[idx*batch_size:(idx+1)*batch_size]
        Y = Y_Train[idx*batch_size:(idx+1)*batch_size]

        # Compute the gradient and step with learning rate learning_rate / sqrt(cnt)
        w_grad, b_grad = _gradient(X, Y, w, b)
        w = w - learning_rate / np.sqrt(cnt) * w_grad
        b = b - learning_rate / np.sqrt(cnt) * b_grad
        cnt += 1

    # Accuracy and average loss on the training and development sets after each epoch
    Y_Train_pred = np.round(_f(X_Train, w, b))
    Train_acc.append(_accuracy(Y_Train_pred, Y_Train))
    Train_loss.append(_cross_entropy_loss(_f(X_Train, w, b), Y_Train) / len(X_Train))

    Y_dev_pred = np.round(_f(X_dev, w, b))
    dev_acc.append(_accuracy(Y_dev_pred, Y_dev))
    dev_loss.append(_cross_entropy_loss(_f(X_dev, w, b), Y_dev) / len(X_dev))

    print(Train_loss[-1])
    print(Train_acc[-1])

print('Training loss: {}'.format(Train_loss[-1]))
print('Development loss: {}'.format(dev_loss[-1]))
print('Training accuracy: {}'.format(Train_acc[-1]))
print('Development accuracy: {}'.format(dev_acc[-1]))
```
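For experimenting without the HW2 data, the same training procedure (reshuffle every epoch, mini-batches of 10, step size `learning_rate / sqrt(t)` where `t` counts updates) can be packaged as one function and run on synthetic data. This is a sketch: `train_logistic` and the linearly separable toy data set are hypothetical, and like the loop above it drops the final partial batch of each epoch.

```python
import numpy as np

def sigmoid(z):
    return np.clip(1 / (1.0 + np.exp(-z)), 1e-8, 1 - 1e-8)

def train_logistic(X, Y, epochs=10, batch_size=10, learning_rate=0.25, seed=0):
    """Mini-batch gradient descent with step size learning_rate / sqrt(t), t = update count."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    w, b = np.zeros((d, 1)), np.zeros((1,))
    t = 1
    for _ in range(epochs):
        perm = rng.permutation(n)  # reshuffle X and Y with the same permutation every epoch
        X, Y = X[perm], Y[perm]
        for idx in range(n // batch_size):
            xb = X[idx * batch_size:(idx + 1) * batch_size]
            yb = Y[idx * batch_size:(idx + 1) * batch_size]
            err = yb.flatten() - sigmoid(xb @ w + b).flatten()
            w = w - learning_rate / np.sqrt(t) * (-err @ xb).reshape(d, 1)
            b = b - learning_rate / np.sqrt(t) * (-np.sum(err))
            t += 1
    return w, b

# Hypothetical separable data: the label is 1 exactly when x0 + x1 > 0
rng = np.random.default_rng(1)
X = rng.normal(size=(500, 2))
Y = (X[:, 0] + X[:, 1] > 0).astype(float).reshape(-1, 1)

w, b = train_logistic(X, Y)
acc = np.mean(np.round(sigmoid(X @ w + b)) == Y)
print(acc)
```

The `1 / sqrt(t)` schedule lets early updates move quickly while shrinking later steps, which damps the noise that comes from estimating the gradient on only 10 samples at a time.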
